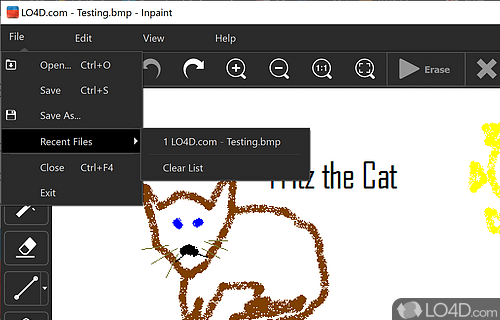

Model Description: This is a model that can be used to generate and modify images based on text prompts. It is a Latent Diffusion Model that uses a fixed, pretrained text encoder (CLIP ViT-L/14), as suggested in the Imagen paper.

License: The CreativeML OpenRAIL M license is an Open RAIL M license, adapted from the work that BigScience and the RAIL Initiative are jointly carrying out in the area of responsible AI licensing. See also the article about the BLOOM Open RAIL license, on which our license is based.

Resources for more information: GitHub Repository, Paper.

The model is intended for research purposes only. Possible research areas and tasks include:

- Safe deployment of models which have the potential to generate harmful content.
- Probing and understanding the limitations and biases of generative models.
- Generation of artworks and use in design and other artistic processes.
- Applications in educational or creative tools.

Misuse, Malicious Use, and Out-of-Scope Use

Note: This section is taken from the DALLE-MINI model card, but applies in the same way to Stable Diffusion v1.

The model should not be used to intentionally create or disseminate images that create hostile or alienating environments for people. This includes generating images that people would foreseeably find disturbing, distressing, or offensive, or content that propagates historical or current stereotypes.

The model was not trained to produce factual or true representations of people or events, and therefore using the model to generate such content is out-of-scope for the abilities of this model. Using the model to generate content that is cruel to individuals is a misuse of this model.
Stable Diffusion is a latent text-to-image diffusion model capable of generating photo-realistic images given any text input. For more information about how Stable Diffusion functions, please have a look at Hugging Face's Stable Diffusion blog.

Developed by: Robin Rombach, Patrick Esser

Model type: Diffusion-based text-to-image generation model

The Stable-Diffusion-v1-5 checkpoint was initialized with the weights of the Stable-Diffusion-v1-2 checkpoint and subsequently fine-tuned for 595k steps at resolution 512x512 on "laion-aesthetics v2 5+", with 10% dropping of the text-conditioning to improve classifier-free guidance sampling.

You can use this both with the Diffusers library and the RunwayML GitHub repository.

    import torch
    from diffusers import StableDiffusionPipeline

    model_id = "runwayml/stable-diffusion-v1-5"
    # Load the weights in half precision, then move the pipeline to the GPU.
    pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16)
    pipe = pipe.to("cuda")

    prompt = "a photo of an astronaut riding a horse on mars"
    image = pipe(prompt).images[0]  # the first generated PIL image

For more detailed instructions, use-cases and examples in JAX follow the instructions here.

Two checkpoint files are listed:

- v1-5-pruned-emaonly.ckpt - 4.27GB, ema-only weight.
- v1-5-pruned.ckpt - 7.7GB, ema+non-ema weights. Uses more VRAM, suitable for fine-tuning.

The Power of Photoshop in a Free Package – GIMP

If you haven't been introduced to GIMP yet, then it's high time. GIMP (the GNU Image Manipulation Program) is the open source alternative to Photoshop, and for those of us who aren't moneybags it also translates to free. Here are five websites to learn a bit more about GIMP and get comfortable with the program. Or, if you want to do it faster, here are some easy GIMP video tutorials for a beginner.

Just like many programs today, the graphic editor can also be extended with special GIMP plug-ins. It is just such a plug-in that we are going to turn to for removing timestamps from our photos. The plug-in we are going to use is called Resynthesizer. It is available for both Windows and Linux.

After you download the ZIP file, copy all the Python files and paste them into GIMP's plug-in folder. To locate the plug-in folder, launch GIMP. You can see the path for my specific portable installation of GIMP. Restart GIMP so the graphic editor recognizes the new files.
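If you would rather not copy the files by hand, the installation step described above (taking the Python files out of the downloaded ZIP and putting them into GIMP's plug-in folder) can be scripted. What follows is only a minimal sketch using the Python standard library. The function name `install_plugin_scripts` is my own invention, and the script makes no attempt to find the plug-in folder for you: you still have to look that path up in your own GIMP installation and pass it in.

```python
import shutil
import zipfile
from pathlib import Path


def install_plugin_scripts(zip_path, plugin_dir):
    """Copy every .py file found in the ZIP archive into plugin_dir.

    Hypothetical helper for illustration only. Returns the sorted list of
    file names that were copied.
    """
    plugin_dir = Path(plugin_dir)
    plugin_dir.mkdir(parents=True, exist_ok=True)
    copied = []
    with zipfile.ZipFile(zip_path) as archive:
        for member in archive.infolist():
            name = Path(member.filename).name
            # Skip folders and anything that is not a Python file.
            if member.is_dir() or not name.endswith(".py"):
                continue
            # Ignore any folder structure inside the archive: only the file
            # itself is written into the plug-in folder.
            with archive.open(member) as src, open(plugin_dir / name, "wb") as dst:
                shutil.copyfileobj(src, dst)
            copied.append(name)
    return sorted(copied)
```

A call would look like `install_plugin_scripts("downloaded.zip", "path/to/plug-ins")`, where both arguments are placeholders for the ZIP you downloaded and the folder your GIMP reports. You still need to restart GIMP afterwards so it picks up the new files.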
